What happened?

... is a valid question. The just-published paper by Z. Yang et al. was not properly reviewed (and is neither the first nor will it be the last, no matter which journal and how high the impact factor). The striking difference from most other journals is: because of the transparency policy of PeerJ, we can try to track the faults and cracks in the system.

What distinguishes PeerJ is that the authors can make the peer review process public. Transparency in peer review is the only safeguard against peer review's many issues. And having got nice (toothless, I'd say) reviews, the authors did. Which is highly laudable.

Now, we will enter a tricky minefield, because it may strike one as a bit racist. It's important to keep in mind that scientists are ordinary humans, can be as provincial as anyone else, and are not infallible. Science can be a nasty business (and I'm very happy to be out of it), which may require at some point that you choose between your convictions (ethics) and getting ahead in your career.

We are furthermore all the product of our cultures, and only a few of us are lucky enough to be born into relatively utopian societies – striving for equality etc., not having to exhaust ourselves getting ahead or lacking a few basic human rights from the cradle onwards. I doubt that my attitude to science – check everything twice, never blindly believe a printed word – would be the same if I'd not been born in Germany and enjoyed the freedom (and laxness) of the social-democratic German higher education, a pretty utopian idea implemented in the 70s; it slowly deteriorated in the 80s when the conservatives took over, but I finished just before we tried to emulate the capitalist Anglo-Saxon system, which made things much worse for everyone involved. Hence, I can only boast a single Dipl. geol. and not three B.Sc. and two M.Sc., but I also got away (for a good while) with having my first first-author paper out* 14 years after I entered university and three years after I got my Dr. rer. nat., i.e. finished my Ph.D.

*After having lost a fight with the then editor of Syst. Biol., Rod Page, who didn't find it novel enough for his high impact journal; a decade later I could claim two papers in the "flagship" of phylogenetics.


| A network of world happiness (methodology/basics; more info/maps for the EU). The arrows indicate the position of the countries I was educated in and/or was paid to do my research (whatever I wanted) and that of the other science countries involved in this post. |

Weakness number 1: an unwitting editor with a preference for compatriots

The editor, from India and now working as a post-doc for the NIH in Bethesda (U.S.), is completely alien to the topic (he currently researches breast cancer). That is not unusual: you take what you get, somebody has to do the job (and there may be quite a few western colleagues who, on principle, will not handle or review any Chinese paper, for bad and good reasons). PeerJ – I co-authored a bunch of papers there, and even the ones lacking an editor from the exact field, palynology, were professionally handled (peer review history #1, #2, #3, #4) – got quite swamped during its no-APC-charges frenzy in the Darwin year. Maybe it expanded too fast, and now breast cancer researchers have to handle molecular botanical papers. Or, maybe, the authors picked the editor for being a fellow Asian. There is a lot of cross-reviewing between the two (re-)emerging science powers India and China (note their position in the happiness network above) in any field of science. PeerJ requires you to suggest editors from their list and recommends suggesting five or more potential reviewers, while you can exclude others.

One of the reviewers who signed his review (which everyone should do, but few have the guts to do or fear the consequences) is from India as well, a bit of a surprise, scientifically. You will struggle hard to find an Indian study on Fagales. Although Fagales are ubiquitous, most of them are not found in the tropics (and those that are, are severely understudied). So far, I have not seen any data from Indian Fagales, not even from the very interesting oaks on the southern slopes of the Himalayas.

Picking reviewers from your own country irrespective of competence may be pretty common (Methods in Ecology & Evolution provides a nice list of DOs and DON'Ts for how to suggest reviewers). Since peer review is usually confidential, there are few hard data.

My experience in the business was:

- Submit to a U.S. journal, you get a U.S.(-based) reviewer, largely from the same field, not rarely a competitor. Who has, of course, no competing interests to declare (other than trying to delay the publication of your paper). If everything else fails, they love to criticise the English corrected by your native-speaker co-author when a) not from the U.S., and b) not the first author of the paper (apparently convinced that a native English-speaking middle author doesn't bother reading and checking the submitted paper; we have a fitting saying in German: Wer von sich selbst auf andere schließt ...).

- Submit to a European journal, you get whoever worked a lot in that particular field/on that organism. A special case are low-impact journals: you won't be able to squeeze a review out of the (e.g. U.S.-based) expert, so you have to settle for a fellow European colleague from a neighbouring field. When I worked as (half-)editor for the Nordic Journal of Botany, we ended up reviewing papers ourselves because none of the fully fitting experts was willing to spend half an hour to read over a short, particulate paper from eastern Europe or the Orient.

- I personally never published in a Chinese journal but was told you have a much higher chance of getting Chinese reviewers than anywhere else. Which can be a boon for Americans and Europeans, because Chinese culture has never endorsed criticism of seniority. It may be tempting for Chinese authors for the same reason. But it also can be hack-and-slash for them, if the paper lands in the wrong hands. China is now the largest science nation (by sheer number of researchers), possibly spending more on research than any other country in the world, but the huge mass generates extreme competition (I imagine it is not that different from how they select their Olympic athletes: it used to be pick one, drop a thousand, and the future is Gattaca). Just check how many Chinese first authors also act as the corresponding author of their paper: this honour is reserved for the senior. A necessity, because many of those first authors will be gone in a few years, but the senior stays (quite typical for, e.g., France as well).

- And Indian journals, well, Beall's List is offline, but this was where you could find a lot of them. They made predatory publishing an artform (and ironically, now do the proof-setting for all big, money-spilling, established science publishers in the Grand Old West, providing unbelievably professional service).

Weakness number 2: equally unwitting reviewers (tied to the editor)

This is the profile info on the non-anonymous reviewer.


| PeerJ's profile page for the non-anonymous reviewer. |


| His skills on ResearchGate (assuming it's really the same guy) |

What may have qualified him is his current institution: National Institutes of Health, Bethesda, United States. Rings a bell? Before it was: Indian Institute of Science, India, Bangalore (till 2014). The editor "completed [his] Ph.D. training ... in 2012, at Indian Institute of Science, Bangalore, India".

Both are big institutes, but it's quite a coincidence.

Here are the DON'Ts in the Methods in Ecology & Evolution post; some are good advice for editors, too.

- ... include anyone who has a conflict of interest

- ... just suggest your friends

- ... only recommend the biggest names in the field

- ... just suggest people who you know will agree with everything you say

- ... include people who won't provide a review on your list [I would add: can't provide ...]

The second reviewer stayed anonymous, so we can't dig deeper here. Judging from the initial review: no expert on phylogenetics, biogeography or – God forbid – Betulaceae. Or, somebody with personal reasons to let the paper pass. These can include but are not limited to:

- Being a(nother) friend of the editor, or the authors (No. 2 on Methods in Ecology & Evolution's DON'Ts list, which strikes one as common sense).

- A proud Chinese waving through the work of other Chinese to fight scientific imperialism – which is something that indeed may exist; even as Europeans hailing from famed science nations, we faced some nasty reviews from colleagues beyond the seas.

- Fear of peer review transparency. What if you had no time and didn't really bother to do more than read over the text once, despite having all the needed knowledge? What if you write something stupid in your review? The authors decide to disclose the peer review process. How shameful would that be! Much less shameful to put your name on a badly conceived paper; at least then it's another bullet point on your all-deciding publication list. Publish or perish, it doesn't help to be picky.

Likely just the tip of the iceberg

I doubt that such scientific amateurism and provincialism, having old buddies or former students review papers you handle even though their area of research is nearly as far from the content of the paper as yours, is something unique to PeerJ.

Lack of basic scientific standards may be a bit more common in Asia than the rest of the World (science mirrors cultures, criticising is considered a hostile act). I found many recent Indian, Chinese or Sino-American co-productions devoid of use. Data are produced, fed to all possible programmes (the compilation and analysis of big data is a no-brainer these days), with trivial, invalid or just odd results or conclusions, especially when it comes to historical plant biogeography. Two recent examples:

- How not to make a biogeographic study

- Trivial but illogical – reconstruction the biogeographic history of the Loranthaceae (again)

This one, for instance, was published in 2013 in PLoS ONE. Here are the principal findings.

The species discrimination ability of ITS ranged from 24.4 percent to 74.3 percent and that of trnH-psbA was 25.6 percent to 67.7 percent, depending upon the data set and the method used. ... Species resolution by ITS2 and rbcL ranged from 9.0 percent to 48.7 percent and 13.2 percent to 43.6 percent, respectively. Further, we observed that the NCBI nucleotide database is poorly represented by the sequences of barcode loci studied here for tree species.

And now includes dozens of mislabelled sequences (for those familiar with barcoding, give it a read). The promise of barcoding is that we can get rid of taxonomists, but it may be a good idea to hire one before making a pilot study and adding new species to the gene banks represented only by erroneous data (see my comments to the paper). And despite having worked (at some point) with non-trivial ITS data himself, the editor (a very clever Canadian*) may have been too reliant on the reviews. Or lacked the time to handle this properly.

* I met him in 2007 at a conference in Uppsala, and he talked to me since we were the only two presenters, in addition to the keynote, who actually showed phylogenetic networks in a symposium dedicated to them. The title of my talk was: "ITS evolution in Platanus: Homoeologues, pseudogenes, and ancient hybridisation"; six years is a long time in science and the chosen reviewers had to be barcoders, of course.

Hard to find reviewers for Fagales complete plastome papers

A more recent example I came across is this 2018 paper in Frontiers in Plant Science by Y. Yang et al. (an entirely different set of authors than Z. Yang et al.), providing an equally enhanced phylogeny based on complete oak plastomes. Frontiers is originally a Swiss open access publisher with great aims. However, some of its outlets drifted into the growing grey zone between predatory and proper publishing (see this 2016-post). FiPS appears to be largely a Chinese-run journal (in my fields), and you can find people in the Grand Old West who already consider it a dump for papers rejected elsewhere: an alternative to PLoS ONE, where quality varies extensively from field to field and editor to editor, and Springer-Nature (SN)'s Scientific Reports*, currently the best receptacle for a quick and safe dump. I never felt the need to cite a FiPS article because the few in my field that crossed my path all lacked scientific rigour. For the same reason, I was never tempted to submit a paper there, despite my preference for some of their publishing policies (open access, non-anonymous reviews, comment option). Also, they are much more expensive than PeerJ; for those with measurable impact like FiPS, you are charged nearly 3000 US$. That is about the price you pay for, e.g., SN's BMC journals, which have a better reputation and an even higher impact factor.

* Scientific Reports tried to recruit me as editor when just launched, with the promise that as an editor, I don't get paid (of course) but get an APC bonus and would have had the right to publish papers without bothering reviewers. Strangely, I never got an answer to my question if that would not invite scientific corruption (PS: Note how often the journal pops up in the Loop profiles of FiPS authors, editors, and reviewers).

Like the PeerJ Betulaceae paper, this oak plastome phylogeny paper was apparently not scrutinised by anyone familiar with the studied organism or the data situation. But handled and reviewed by people with some experience in the general field(s). Frontiers lists the editor and the reviewers.

- The editor is a Chinese geneticist (so from the proper field) at Saint Mary's University, Halifax, Canada and, in a former life, three-time co-author (2007, 2008a, 2008b; I love Frontiers' Loop service) of the senior corresponding author of the paper, hailing from the Northwest University in Xi'an (the senior author's Loop publication list reveals him as a prototypical senior/last author: he makes the Rubel roll, as we Germans say, and leaves the actual work to others).

- One reviewer is from Belgium: a molecular data-analyst who worked with data from bacteria and ascomycetes, likely familiar with the technique (which doesn't require much review at all: once a reference plastome is set for a genus, annotating the second, third, etc. is merely a technical, computer-assisted exercise) but ignorant of the data situation (like probably everyone that never worked on oaks).

- The other was a Chinese from the Sun Yat-sen University in Guangzhou (#259 in the "QS Global Ranking") with some focus on Podocarpus, a conifer, who reviewed another paper for the same editor dealing with a Crassulaceae (Rhodiola fastigiata), and reviewed one (for a different Chinese editor) on another Fagales (Chinese walnuts).

I know from my own experience that there are very opinionated "de-facto" oak experts (to give one example; there's another even older specimen, you don't want as reviewer ... got 'em all and hard) who will not be able to provide a proper, unbiased and constructive review. But it probably wouldn't have hurt to ask one of the many younger Americans or young and old Europeans who have not only worked on oaks (like me) but also have experience with phylogenomics. After all, FiPS is "the world's most-cited Plant Science journal".

As mentioned, one plus of Frontiers, shared with PLoS and PeerJ, is the comment option. So, I did make a post-review (comments are restricted to 4000 words, so I left aside minor errors; scroll to the bottom of the article's page).

Why full peer transparency is obligatory

Science is very fragmented and hazardously specialised. Peer review is like democracy: it is faulty, but it is the best alternative we have to keep too much bad science from being published.

No matter how stringent the system, dubious results and reconstructions will pop up in peer reviewed journals because of

- unwitting or time-pressed editors,

- poorly chosen/acting reviewers,

- the general fear of openly criticising the work of others (no-one handing in anonymous reviews can point the finger at those reviewing for Frontiers!) and

- last but not least, widespread scientific chauvinism.

There's no way to avoid the pitfalls entirely: editors have the worst job there is in science, and reviewers usually neither benefit from doing good work nor face consequences when delivering shit.

But: Good reviewers and editors (FiPS boasts more than 5000) should get their proper credit!

Publishing the names of the editors and reviewers (as done by Frontiers) and their reports (as done by PeerJ) is a huge leap forward. Science needs full transparency.

A critical or suspicious reader can then easily find out why peer review failed or misfired for a particular paper. When the peer review is made transparent, you can quickly get an idea about self-promoting circles and, just by the names, judge how reliable the published paper can possibly be.

And, as author, you know where to go when trying to publish something quick and smooth.

For instance, I bet that this upcoming review on a high-catch topic by a German professor* will be quite amusing. The profile of the editor lists 26 publications and boasts one "Edited Research Topic" reminiscent of a book published by Wiley in 2018 involving leading figures of the German-centred NECLIME science syndicate (with many ties to China; and to which the author has professional ties). The profile of reviewer 1 lists three publications; the profile of reviewer 2 lists six. All handlers are Chinese, of course, but that fits geographically with the topic of the paper (Tibetan Plateau).


| Reviewing a review – a good practice for newbies. |

The book includes a chapter co-authored by the German with pretty much the same content (judging from the abstract) but, intriguingly, regarding the topic of this post, scientific provincialism undermining the peer review process, includes only a single chapter authored by three Chinese researchers (two more are back-end authors of other chapters boasting five and 11 co-writers, quite a lot for a book). FiPS seems to be a good way to revitalise your publication list a bit (e.g. by having your personalised "edited paper collection") with a first-authorship (you don't have the time to write papers as an active German professor). The author's Loop profile is, however, private, but there's still GoogleScholar.

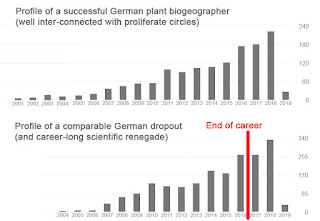


| Networking and being a fixed-employed professor pays off — eventually. Just give it a decade or two more. |

* We "co-authored" a paper in 2014, very tough during review (What I was not allowed to show #2). A swift experience that left me wondering how so many excel in a field, plant biogeography (also the topic of the upcoming review), while having so little idea about the very basics: the assumptions and data used.

PeerJ and Frontiers give a good example

It cannot be stressed strongly enough that despite all problems that surface the deeper we look into the abyss, PeerJ and Frontiers show how it should be done.

Frontiers' Loop service is simply brilliant for tracing connections that may unduly affect the peer-review, something largely impossible for the grand journals such as Science and Nature and their many, partly very ugly little children practising and cultivating peer review obscurity.


| The standard peer review process – you rarely see what is put in, and have to live with what comes out of the black-box. |

PeerJ's peer review transparency policy should be a must for all journals that claim to be peer-reviewed. Regarding the option for staying anonymous: if – as an expert in at least some aspect touched by a paper – I can't put my name under my judgement (for whatever reason), I should not judge. And there is no shame to write something like this:

Regarding the genetic part of the paper, I am not competent to give any advise. But I like the results. – Quote from a signed, elaborate (couple of pages long) review of our 2007 paper on Acer sect. Acer, submitted (straight-away) to a low-impact, traditional European journal, and reviewed by a truly great, now, unfortunately, dead, German palaeobotanist (some provincialism is unavoidable in our fragmented scientific world; he was the only other one, still living, who knew his fossil maples). Only one review: the editor couldn't get a second despite giving it a considerable amount of time (about half a year).

How can we criticise the Trumps of this world, how they undermine facts and science, and then argue for peer review confidentiality and anonymity?

Frontiers and PeerJ (more elaborate) have options for post-publication review. When running across a flawed and poorly reviewed/handled paper, why not point the error out so the authors can do better next time? Without having to rely exclusively on the botanical-genetic expertise of a microbiologist or medic. Having experienced some severe delays in the publication of my papers (and I'm only a bit ashamed of the one published in Nature in 2007), I cannot blame anyone who tries to use scientific provincialism and known science dump sites to sneak past the usual beasts lurking in the Forest of Review. Just to avoid this:


| From a fun post: How not to be Reviewer #2. |

Further reading

Check out my other posts flagged as #FightTheFog.

Excellent writing as usual. Btw. Regarding utopian ideals. I just spent two weeks in Japan with my wife. She is Japanese American and her 80 year old mother who lives in Tokyo had to be admitted into a nursing home (ultimately). It was interesting to see how much help my mother in law has been receiving. Initial in home doctors visits, nurses, caretakers, wheelchairs, beds. And everyone was extremely friendly and helpful. I think the Japanese society is the most cooperative I've seen so far and definitely a step closer to utopian ideals than most western societies.

There's indeed some analogy to science.

The Japanese scientists I met (from different fields) all were very friendly, generally relaxed and open, even regarding unpublished results (something very rare in the U.S., China and, increasingly, Europe).

Recently, an Australian working in Japan told me that he doesn't ever plan to go back, also because they don't need to publish to not perish. Something that reminded me of Germany before internationalisation. The most knowledgeable researchers at the university were often mid-level employees, who published little and, when they did, typically in classic, low-impact journals. But all those positions have been converted into 3+3-year post-docs. This increased scientific output. Being a temp, the post-doc has a personal interest in writing as many papers as possible using the data of the Master students and Ph.D.s, and the professor (who naturally has to be co-author on all these papers; there are luckily only very few left who insist on being first author) is free to maintain the networks.

But it also increased competition and, subsequently, uncooperativeness, even within research groups or clusters. Instead of researchers who can afford to indulge in one speciality and then offer everyone their particular knowledge when needed, science wants "Fahrradfahrer, die nach oben buckeln und nach unten treten" (cyclists who bow to those above and kick down at those below).